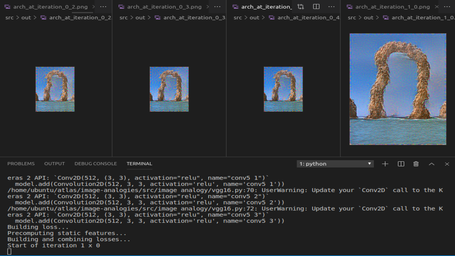

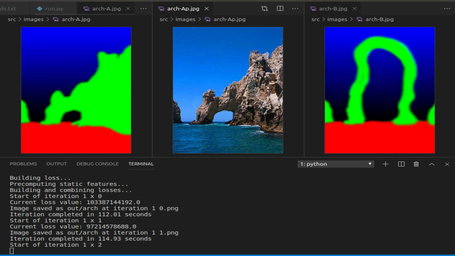

NEURAL STYLE TRANSFER FOR MAKING ANALOGOUS IMAGES

WHAT IS IT?

Neural style transfer is a machine learning technique that merges the “content” of one image with the “style” of another. This implementation is based on the "Image Analogies" paper.

HOW TO USE?

Before running this script, download the weights for the VGG16 model. This file contains only the convolutional layers of VGG16, which makes it 10% of the full size. Original source of full weights. The script assumes the weights are in the current working directory. If you place them somewhere else, pass the --vgg-weights=<location-of-the-weights.h5> parameter or set the VGG_WEIGHT_PATH environment variable.
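The lookup described above can be sketched in Python as follows. This is a minimal illustration, not the code in run.py: the function name `resolve_vgg_weights`, the default file name `vgg16_weights.h5`, and the precedence of the command-line value over the environment variable are all assumptions.

```python
import os
from pathlib import Path

# Hypothetical default file name; the README does not state the real one.
DEFAULT_WEIGHTS_NAME = "vgg16_weights.h5"


def resolve_vgg_weights(cli_value=None, env=None, cwd=None):
    """Pick a weights path: --vgg-weights, then VGG_WEIGHT_PATH, then the cwd.

    The precedence order is an assumption made for this sketch.
    """
    env = os.environ if env is None else env
    cwd = Path.cwd() if cwd is None else Path(cwd)

    if cli_value:
        # Explicit command-line value is used as given.
        return Path(cli_value)

    env_value = env.get("VGG_WEIGHT_PATH")
    if env_value:
        # Fall back to the environment variable named in the README.
        return Path(env_value)

    # Last resort: look in the current working directory.
    return cwd / DEFAULT_WEIGHTS_NAME
```

Calling `resolve_vgg_weights().exists()` before starting a long run is one way to fail early when the weights file is missing.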

Example script usage:

python run.py images/arch-mask.jpg images/arch.jpg images/arch-newmask.jpg out/arch

Another example:

python run.py images/arch-A.jpg images/arch-Ap.jpg images/arch-B.jpg out/arch
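To launch the script from other Python code, the same command line can be assembled programmatically. The helper below is a hypothetical convenience wrapper, not part of this project. It assumes run.py is in the current directory and that keyword names map to flags by replacing underscores with hyphens, as with `--width` and `--output-full` in the help output.

```python
import sys


def build_analogy_command(image_a, image_ap, image_b, out_prefix, **options):
    """Build an argument list for run.py (hypothetical helper).

    Positional order follows the examples above: A, A', B, output prefix.
    """
    cmd = [sys.executable, "run.py", image_a, image_ap, image_b, out_prefix]
    for name, value in options.items():
        flag = "--" + name.replace("_", "-")
        if value is True:
            # Boolean switches such as --output-full take no value.
            cmd.append(flag)
        elif value is not None and value is not False:
            cmd.append(f"{flag}={value}")
    return cmd


cmd = build_analogy_command(
    "images/arch-mask.jpg",
    "images/arch.jpg",
    "images/arch-newmask.jpg",
    "out/arch",
    width=512,
    output_full=True,
)
# To actually run it: import subprocess; subprocess.run(cmd, check=True)
```

Passing the arguments as a list avoids shell quoting problems when image paths contain spaces.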

For help options, run python run.py -h:

    usage: run.py [-h] [--width OUT_WIDTH] [--height OUT_HEIGHT]
                  [--scales NUM_SCALES] [--min-scale MIN_SCALE]
                  [--a-scale-mode A_SCALE_MODE] [--output-full]
                  [--iters NUM_ITERATIONS_PER_SCALE] [--model MATCH_MODEL]
                  [--mrf-nnf-steps MRF_NNF_STEPS] [--randomize-mrf-nnf]
                  [--analogy-nnf-steps ANALOGY_NNF_STEPS] [--tv-w TV_WEIGHT]
                  [--analogy-w ANALOGY_WEIGHT] [--analogy-layers ANALOGY_LAYERS]
                  [--use-full-analogy] [--mrf-w MRF_WEIGHT]
                  [--mrf-layers MRF_LAYERS] [--b-content-w B_BP_CONTENT_WEIGHT]
                  [--content-layers B_CONTENT_LAYERS]
                  [--nstyle-w NEURAL_STYLE_WEIGHT]
                  [--nstyle-layers NEURAL_STYLE_LAYERS]
                  [--patch-size PATCH_SIZE] [--patch-stride PATCH_STRIDE]
                  [--vgg-weights VGG_WEIGHTS] [--pool-mode POOL_MODE]
                  [--jitter JITTER] [--color-jitter COLOR_JITTER]
                  [--contrast CONTRAST_PERCENT]

    Neural image analogies with Keras.

    positional arguments:
      ref                   Path to the reference image mask (A)
      base                  Path to the source image (A')
      ref                   Path to the new mask for generation (B)
      res_prefix            Prefix for the saved results (B')

    optional arguments:
      -h, --help            show this help message and exit
      --width OUT_WIDTH     Set output width
      --height OUT_HEIGHT   Set output height
      --scales NUM_SCALES   Run at N different scales
      --min-scale MIN_SCALE
                            Smallest scale to iterate
      --a-scale-mode A_SCALE_MODE
                            Method of scaling A and A' relative to B
      --a-scale A_SCALE     Additional scale factor for A and A'
      --output-full         Output all intermediate images at full size
                            regardless of current scale.
      --iters NUM_ITERATIONS_PER_SCALE
                            Number of iterations per scale
      --model MATCH_MODEL   Matching algorithm (patchmatch or brute)
      --mrf-nnf-steps MRF_NNF_STEPS
                            Number of patchmatch updates per iteration for
                            local coherence loss.
      --randomize-mrf-nnf   Randomize the local coherence similarity matrix at
                            the start of a new scale instead of scaling it up.

Currently, A and A' must be the same size, and the same holds for B and B'. The output size is the same as that of image B unless --width or --height is specified.
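The size rules above can be checked before starting a run. The following sketch works on plain (width, height) tuples so it needs no image library. The function name `resolve_output_size` is hypothetical, and scaling the missing dimension to preserve B's aspect ratio when only one of width or height is given is an assumption, not behaviour confirmed by this README.

```python
def resolve_output_size(size_a, size_ap, size_b, width=None, height=None):
    """Validate input sizes and return the (width, height) of the output B'.

    Sizes are (width, height) tuples. Hypothetical helper for illustration.
    """
    if size_a != size_ap:
        raise ValueError(f"A {size_a} and A' {size_ap} must be the same size")

    b_w, b_h = size_b
    if width is None and height is None:
        # Default: B' has the same size as B.
        return (b_w, b_h)
    if width is not None and height is not None:
        return (width, height)
    if width is not None:
        # Assumption: keep B's aspect ratio when only the width is given.
        return (width, round(b_h * width / b_w))
    # Assumption: keep B's aspect ratio when only the height is given.
    return (round(b_w * height / b_h), height)
```

With Pillow installed, the tuples could be taken from `Image.open(path).size`, which also returns (width, height).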

WHAT ARE THE REQUIREMENTS?

To install all the requirements and dependencies, run the command that matches your hardware:

For GPU - pip install -r gpu_requirements.txt

For CPU - pip install -r cpu_requirements.txt
